Inside the Peer Review Black Box: What peer reviewers look for and how to make their job easier

Created: 3/2/2026, 11:42:59 AM · Updated: 3/2/2026, 3:29:47 PM

This blog explains what happens to a research paper during the peer review process. Drawing on personal experience as a neuroscience reviewer, the author offers insights that apply across many fields. Reviewers often evaluate a manuscript in stages, beginning with the title and abstract, followed by a quick full read, and finally a detailed assessment. Clear writing, strong figures, sound methodology, and appropriate controls are essential. Because reviewers work under time constraints, they may become skeptical if a paper is difficult to follow. The blog provides practical guidance to help authors present their work more clearly and navigate the review process more effectively.

Your manuscript has been “under review” for 10 weeks now. You refresh your inbox. Nothing. You refresh it again, just in case the fault lies with the internet rather than the journal. Still nothing. At this point, even the spam folder begins to look promising.

You begin to wonder: are the reviewers dismantling it sentence by sentence? The reality is usually far more mundane: long delays are almost always caused by editors struggling to find willing reviewers or reviewers accepting the invitation and then ghosting the journal. However, once your paper lands on a reviewer's desk, much of the outcome is in your hands.

Having reviewed a fair number of experimental neuroscience manuscripts and now working with authors to strengthen their submissions, I can offer some reassurance. Most major revisions stem from avoidable presentation and structural issues, not flawed science. When the science is truly poor, the rejection is usually delivered with admirable efficiency.

Here is a peek inside the black box of peer review and how you can optimize your manuscript for success.

1. The reviewer’s mindset: responsibility-driven skepticism

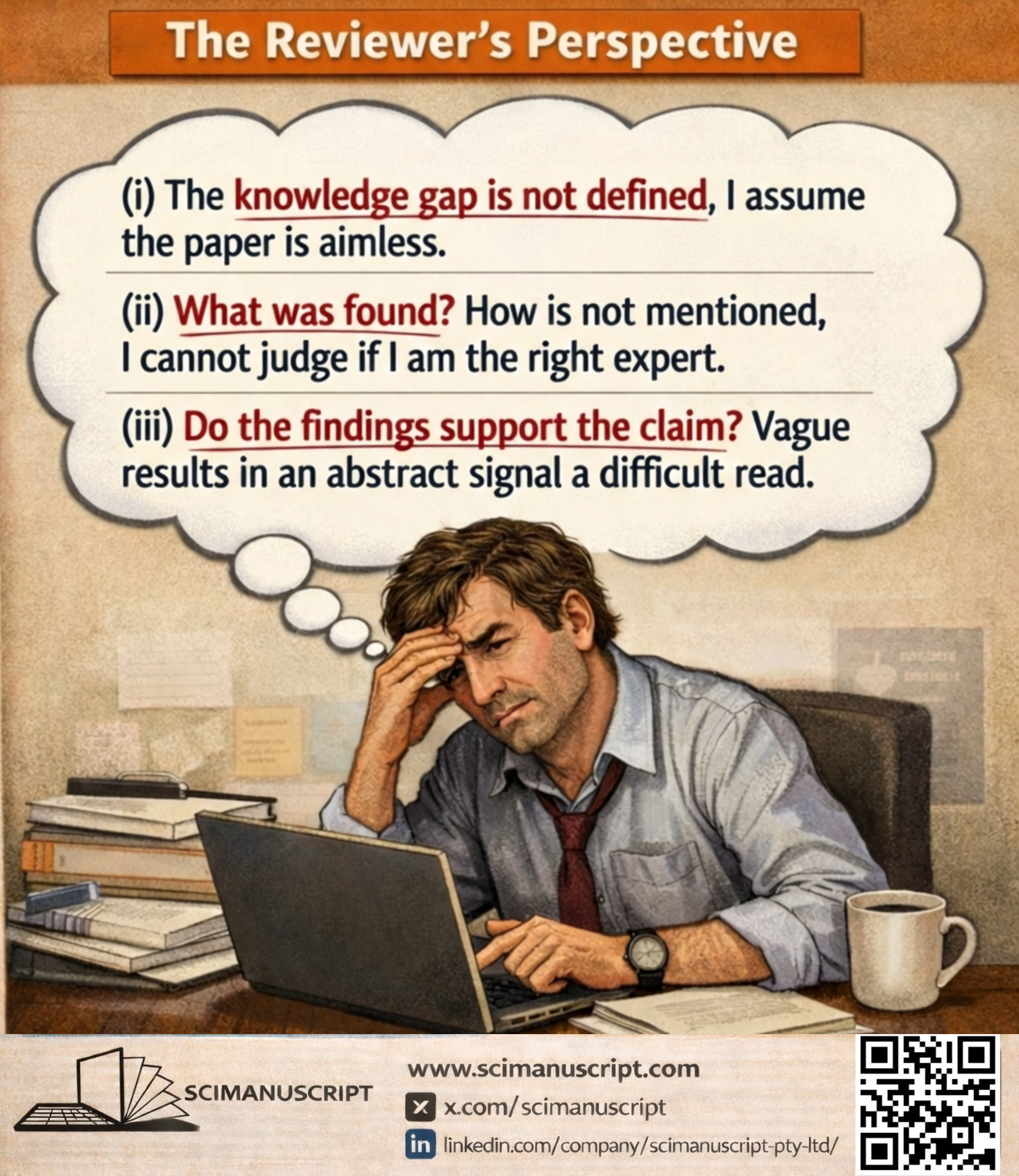

Reviewers operate under severe constraints: they are unpaid, time-poor, and juggling teaching, grants, and their own neglected manuscripts. As reviewers, we are stress-testing your work before it enters the scientific record. The same core questions guided me each time:

- Could the observed effects reflect experimental artifacts (e.g., movement artifact, drug-induced sedation) rather than genuine biological effects?

- Were appropriate controls included (e.g., a vehicle-only group) and properly analyzed?

- Do the trends, effect sizes, and figure-level consistency tell a coherent story, or are we leaning too heavily on p-values? My postdoctoral advisor often remarked, “Pratap, trends and overall patterns matter more; p-values are thresholds, not definitive proof.”

- Is the scope appropriate? (Note: If your paper completely misses the journal's scope or fails basic formatting, the editor will likely desk-reject it before a reviewer ever sees it.)
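
To make the question about p-values and effect sizes concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not a recommended analysis pipeline: the function names `cohens_d` and `approx_two_sided_p` are hypothetical, the measurements are invented, and the p-value uses a large-sample normal approximation for two equally sized groups (a proper t-test would be preferable for small samples).

```python
from math import sqrt
from statistics import NormalDist, mean, stdev


def cohens_d(control, treated):
    """Cohen's d: mean difference divided by the pooled sample SD."""
    n_c, n_t = len(control), len(treated)
    pooled_var = (
        (n_c - 1) * stdev(control) ** 2 + (n_t - 1) * stdev(treated) ** 2
    ) / (n_c + n_t - 2)
    return (mean(treated) - mean(control)) / sqrt(pooled_var)


def approx_two_sided_p(d, n_per_group):
    """Assumption: large-sample normal approximation, equal group sizes."""
    # For two equal groups, the test statistic is roughly d * sqrt(n / 2).
    z = d * sqrt(n_per_group / 2)
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Invented illustrative measurements (arbitrary units), not real data.
vehicle = [10.1, 9.8, 10.4, 9.9, 10.3]
drug = [10.9, 11.2, 10.7, 11.4, 10.8]
print(f"Cohen's d for the toy data: {cohens_d(vehicle, drug):.2f}")

# The same small effect (d = 0.1) evaluated at three sample sizes.
for n in (20, 200, 2000):
    print(f"d = 0.10, n per group = {n}: p ~ {approx_two_sided_p(0.1, n):.4f}")
```

With the effect size held fixed, the approximate p-value shrinks as the sample size grows. This is why a reviewer wants to see the effect size reported alongside the p-value: the p-value alone does not say how large the effect is.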

2. How reviewers actually read your paper: a step-by-step account

Reviewing is rarely linear. It unfolds in three distinct phases. Understanding these phases may allow you to anticipate where a reviewer might lose the thread of your argument.

Phase-1: The invitation (why I agreed or declined): Editors often invite reviewers on the basis of the title and abstract alone. A strong abstract that answers, plainly and convincingly, “So what?” is far more likely to secure a prompt acceptance of the review invitation. A vague or overhyped abstract may struggle to attract reviewers or, in some cases, invite desk rejection. Thus, the abstract matters more than many authors appreciate.

Phase-2: The quick read (where first impressions form): I would begin with a single uninterrupted read-through to gauge originality, relevance, and visual support from the figures. This is where first impressions lock in. If a reviewer feels confused here, it breeds skepticism.

Tip: Ensure your transitions are logical and your central thesis isn't buried on page four. If a reviewer has to constantly flip back and forth to understand your narrative, irritation builds up. Clarity generates goodwill.

Phase-3: The deep dive (systematic evaluation): I would then proceed section by section, adding detailed comments.

- Introduction: Is the knowledge gap genuine? Is the hypothesis logically derived, setting up a compelling narrative that builds to the strongest data?

- Methods: For me, this was often the decisive section. I checked for precision (e.g., electrode thickness, cut-off frequencies, buffer concentrations, cell source, and other details that signal rigor).

- Results: Are the data presented clearly? Can figures and tables be understood without constant reference to the text? Does the narrative faithfully reflect the data?

- Discussion: Strong discussions do more than restate findings. I appreciated when authors acknowledged limitations and explained how the work advances (or challenges) the field.

- References: Here the reviewer becomes something of a detective. I assessed whether the authors genuinely knew the field. If a recent landmark paper contradicted their findings but was conspicuously absent, it rarely went unnoticed.

Make the deep dive phase easy by ensuring your methods are precise, your figures are self-contained, and your discussion is proportionate to your data.

After this process, I would prepare a detailed report outlining points requiring clarification or improvement. I was reluctant to request new experiments unless essential controls were missing or the existing data demanded deeper analysis. In short, I tried not to become the 'dreaded Reviewer 2'.

3. Red flags and what impresses reviewers

If you receive lengthy (e.g., 8-page) feedback, it usually signals that the reviewer sees potential but requires clarification. Such revision requests almost always stem from the same fixable presentation issues.

Common “red flags” include:

- Missing critical details in methodology.

- Overreliance on p-values without interpreting effect sizes.

- Claims in the discussion that far exceed what the data actually justify.

- Figures that require repeated cross-referencing with the text to decode.

- Selective or outdated citations (or citing retracted papers).

- Excessive grammar or typographical errors, which contribute to reviewer fatigue.

Green flags that impress reviewers:

- A narrative that logically frames the hypothesis and seamlessly builds to the strongest data.

- Robust, well-described controls and methods (replicable!).

- Self-explanatory figures that communicate the story visually.

- Honest discussion of limitations and forward-looking implications.

- Broad, up-to-date references demonstrating mastery of the field.

- Clean, precise writing that respects reviewers’ time.

4. Practical steps to improve your manuscript’s chances

If reviewers read skeptically under time pressure and form early impressions, a few practical measures should follow:

- Use limitations strategically: Acknowledge weaknesses that cannot be fixed. Preempting obvious criticism often softens it. Reviewers are less severe when authors demonstrate self-awareness.

- Do not overload the narrative: Including every confirmatory experiment can obscure your central argument. Ensure that all data necessary to evaluate your central claim are included, but resist the temptation to bury the reader under excess.

- Create distance before submission: Set the manuscript aside for a week or two, then reread it. Better still, ask colleagues or mentors to read it as a mock review (a few established researchers I know follow this approach). Structural gaps and conceptual ambiguities are far easier to correct before submission than during a fraught revision process.

- Language polishing during the pause: If English is not your first language or the writing feels a bit stiff, use the time to improve readability. If you used AI tools, reread to ensure meaning has not changed. Also double-check the journal's specific disclosure policy on AI-assisted writing.

5. Final thoughts

Reviewers are generally not trying to be difficult (but exceptions exist). Most take their role seriously: to protect the integrity of the literature while helping sound work reach publication.

Although editors make the final publishing decision, a strongly negative review is difficult to overcome at many high-impact journals. The most effective strategy, therefore, is not defensiveness but anticipation. Help reviewers say “accept” rather than “major revision” by presenting a frictionless, well-structured manuscript and by addressing any critiques carefully, politely, and thoroughly in your response letter.

About the Author: Anupratap Tomar serves as Head of the Neuroscience Editing Team at SciManuscript. The views expressed here are based on his experience reviewing manuscripts for peer-reviewed journals.

Connect with SciManuscript:

X: https://x.com/scimanuscript

LinkedIn: https://www.linkedin.com/company/scimanuscript-pty-ltd